TL;DR

- NVIDIA's Nemotron 3 Super is a 120B-parameter, 12B-active hybrid Mamba-Transformer MoE that scores 60.47 on SWE-Bench Verified, holds 91.75 on RULER at 1M tokens (while GPT-OSS-120B collapses to 22.30), and ships fully open: weights, datasets, and training recipes.

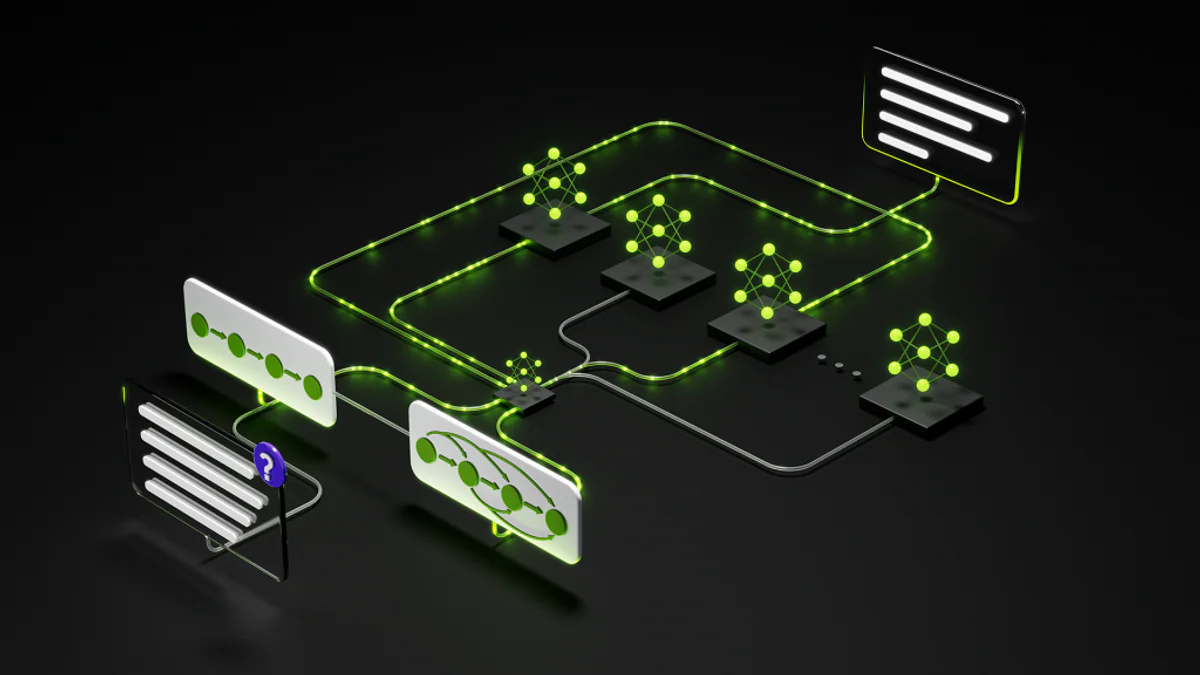

NVIDIA quietly shipped one of the most architecturally ambitious open models of 2026. Nemotron 3 Super is a 120.6B-parameter Mixture-of-Experts model that activates only 12.7B parameters per token — and it was purpose-built to act as the reasoning brain inside long-running multi-agent systems.

- 60.47% on SWE-Bench Verified (OpenHands harness) — top-tier among open models for real-world coding.

- 85.6% on PinchBench — best open model as an OpenClaw agent brain.

- 91.75 on RULER at 1M tokens, while GPT-OSS-120B collapses to 22.30.

- Up to 2.2× faster than GPT-OSS-120B and 7.5× faster than Qwen3.5-122B at 8k in / 64k out.

- Fully open: weights, datasets, training recipes, RL environments — all on Hugging Face under the NVIDIA Nemotron Open Model License.

What's new

Released March 11, 2026, Nemotron 3 Super sits between the lightweight Nemotron 3 Nano (30B, December 2025) and the upcoming Nemotron 3 Ultra (500B, later 2026). It's the first model in the family that pulls together three independently aggressive bets:

- LatentMoE — a new expert routing scheme that compresses tokens into a low-rank latent space before reaching the experts. Result: 4× more experts consulted at the same compute cost.

- Multi-Token Prediction (MTP) — built-in speculative decoding with no separate draft model. Up to 3× wall-clock speedup on structured outputs like code and tool calls.

- Native NVFP4 pretraining — the model was trained in NVIDIA's 4-bit floating-point format from the very first gradient update. Not quantized after the fact.

Why it matters

Multi-agent systems break standard LLM economics. Per NVIDIA's own framing, agentic workflows generate up to 15× the tokens of normal chats, constantly re-passing context, tool outputs, and reasoning steps. That triggers two failure modes:

- The thinking tax — using a giant reasoner for every minor sub-task makes deployments too slow and too expensive.

- The context explosion — agents lose alignment with the original goal as conversation history balloons.

Nemotron 3 Super is engineered to fix both. The MoE design pays only the 12.7B active-parameter cost per token. The 1M-token native window keeps entire codebases and full agent histories live without re-reasoning.
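To make the active-parameter argument concrete, here is a back-of-the-envelope sketch using the parameter counts quoted in this article. The figure of roughly 2 FLOPs per active weight per token is a common rule of thumb and an assumption here, not an NVIDIA number; it ignores attention and state-space costs, so treat the result as an order-of-magnitude comparison only.

```python
# Figures from the article: 120.6B total parameters, 12.7B active per token.
TOTAL_PARAMS = 120.6e9
ACTIVE_PARAMS = 12.7e9

# Assumption (rule of thumb): about 2 FLOPs per active weight per token,
# one multiply and one add. Attention and SSM state costs are ignored.
FLOPS_PER_PARAM = 2


def forward_flops_per_token(active_params: float) -> float:
    """Approximate forward-pass FLOPs for one token."""
    return FLOPS_PER_PARAM * active_params


active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS
moe_flops = forward_flops_per_token(ACTIVE_PARAMS)
dense_flops = forward_flops_per_token(TOTAL_PARAMS)

print(f"active fraction: {active_fraction:.1%}")
print(f"MoE forward cost: {moe_flops / 1e9:.1f} GFLOPs/token")
print(f"dense-equivalent: {dense_flops / 1e9:.1f} GFLOPs/token")
```

Under these assumptions, each token touches about a tenth of the weights, which is where the savings over a dense model of the same total size come from.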

Architecture: three bets, one backbone

The backbone interleaves three layer types in repeating blocks:

- Mamba-2 layers handle the bulk of sequence processing. State Space Models give linear-time complexity, which is what makes the 1M context practical, not just theoretical.

- Transformer attention layers are strategically inserted at key depths to preserve associative recall — the needle-in-a-haystack capability where pure SSMs struggle.

- LatentMoE layers route tokens through a compressed latent dimension, pick top-K specialized experts, then project back. NVIDIA estimates a non-latent equivalent would need to be 35× larger to hit the same accuracy.
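The compress, route, and project-back flow of a LatentMoE layer can be sketched in a few lines. This is a toy illustration, not NVIDIA's implementation: the class name `ToyLatentMoE`, the helper functions, the dimensions, the random weights, the single-matrix experts, and the choice to compute router scores on the latent vector are all assumptions made for the example.

```python
import math
import random


def matvec(matrix, vec):
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vec)) for row in matrix]


def rand_matrix(rows, cols, rng):
    """Random Gaussian matrix, scaled so outputs stay at a similar magnitude."""
    scale = 1.0 / math.sqrt(cols)
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class ToyLatentMoE:
    """Hypothetical latent-space MoE layer: down-project, route, mix, up-project."""

    def __init__(self, d_model, d_latent, n_experts, top_k, seed=0):
        rng = random.Random(seed)
        self.top_k = top_k
        self.down = rand_matrix(d_latent, d_model, rng)    # d_model -> d_latent
        self.up = rand_matrix(d_model, d_latent, rng)      # d_latent -> d_model
        self.router = rand_matrix(n_experts, d_latent, rng)
        # Assumption: each expert is a single d_latent x d_latent linear map.
        self.experts = [rand_matrix(d_latent, d_latent, rng) for _ in range(n_experts)]

    def forward(self, token):
        latent = matvec(self.down, token)                  # compress the token
        scores = matvec(self.router, latent)               # one score per expert
        ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
        chosen = ranked[: self.top_k]                      # top-K expert indices
        gates = softmax([scores[i] for i in chosen])       # normalize over the chosen
        mixed = [0.0] * len(latent)
        for gate, idx in zip(gates, chosen):
            expert_out = matvec(self.experts[idx], latent)
            mixed = [m + gate * o for m, o in zip(mixed, expert_out)]
        return matvec(self.up, mixed), chosen              # project back to d_model


layer = ToyLatentMoE(d_model=16, d_latent=4, n_experts=8, top_k=2)
token = [0.1 * i for i in range(16)]
output, chosen = layer.forward(token)
print(len(output), chosen)
```

The point of the sketch is the cost structure: every expert works on the small latent vector, so for a fixed compute budget more experts can be consulted than if each one operated on the full model dimension.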

On top of that, MTP heads (shared-weight) forecast multiple future tokens per forward pass — providing draft predictions that can be verified in parallel inside the same model.
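The draft-then-verify loop can be illustrated with a minimal greedy acceptance rule. This is a sketch of generic self-speculative decoding, not NVIDIA's code: `accept_drafts` and `toy_next_token` are hypothetical names, the "model" is a toy counting function, and verification runs sequentially here for readability, whereas a real system would check all draft positions in one batched forward pass.

```python
from typing import Callable, List


def accept_drafts(
    context: List[int],
    drafts: List[int],
    next_token: Callable[[List[int]], int],
) -> List[int]:
    """Return the tokens to commit after checking drafts against the main model.

    `next_token` stands in for the main model's greedy prediction and
    `drafts` for the tokens proposed by the MTP heads.
    """
    accepted: List[int] = []
    for draft in drafts:
        target = next_token(context + accepted)
        if draft != target:
            # First mismatch: keep the verified token and discard later drafts.
            accepted.append(target)
            return accepted
        accepted.append(draft)
    # Every draft matched, so the verification pass also yields one extra token.
    accepted.append(next_token(context + accepted))
    return accepted


def toy_next_token(sequence: List[int]) -> int:
    """Toy stand-in for the model: always predicts the last token plus one, mod 10."""
    return (sequence[-1] + 1) % 10


print(accept_drafts([1, 2, 3], [4, 5, 9], toy_next_token))  # [4, 5, 6]
print(accept_drafts([1, 2, 3], [4, 5, 6], toy_next_token))  # [4, 5, 6, 7]
```

When drafts are usually right, as they tend to be on predictable output such as code or tool-call syntax, each verification step commits several tokens at once, which is the source of the wall-clock speedup.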

Technical facts

| Property | Value |
|---|---|
| Total / active params | 120.6B / 12.7B |
| Architecture | Hybrid Mamba-2 + LatentMoE + Attention + MTP |
| Context window | 1M tokens (native) |
| Pretraining tokens | 25T total seen, 10T unique curated |
| Pretraining precision | NVFP4 (native, from gradient zero) |
| Post-training | ~1.2M RL rollouts across 21 environment configs |
| Min GPU | 8× H100-80GB |
| Output speed | ~157 tokens/s |
| Pricing (median) | $0.30 in / $0.75 out per 1M tokens |
| License | NVIDIA Nemotron Open Model License (commercial use) |
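For a rough sense of how precision affects the hardware bar, the sketch below estimates weight storage at the three checkpoint precisions. It is an approximation under stated assumptions: 2, 1, and 0.5 bytes per parameter for BF16, FP8, and NVFP4 respectively, with no allowance for NVFP4 scale factors, KV cache, Mamba state, or activations, so real memory use will be higher.

```python
TOTAL_PARAMS = 120.6e9  # total parameter count from the table above

# Assumption: nominal bytes per parameter; NVFP4 block scale factors are ignored.
BYTES_PER_PARAM = {"BF16": 2.0, "FP8": 1.0, "NVFP4": 0.5}

NODE_MEMORY_GB = 8 * 80  # 8x H100-80GB, the minimum listed in the table


def weight_footprint_gb(n_params: float, bytes_per_param: float) -> float:
    """Weights-only storage in GB (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


footprints = {
    name: weight_footprint_gb(TOTAL_PARAMS, width)
    for name, width in BYTES_PER_PARAM.items()
}

for name, gb in footprints.items():
    share = gb / NODE_MEMORY_GB
    print(f"{name}: {gb:.1f} GB of weights ({share:.0%} of {NODE_MEMORY_GB} GB)")
```

The weights alone are a fraction of an 8-GPU node at any of these precisions; the remaining memory goes to long-context state and batching, which this estimate deliberately leaves out.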

Comparison vs Qwen3.5-122B and GPT-OSS-120B

| Benchmark | Nemotron 3 Super | Qwen3.5-122B | GPT-OSS-120B |
|---|---|---|---|
| SWE-Bench Verified (OpenHands) | 60.47 | 66.40 | 41.90 |
| PinchBench (agent brain) | 85.6 | — | — |
| RULER @ 256k | 96.30 | 96.74 | 52.30 |
| RULER @ 1M | 91.75 | 91.33 | 22.30 |
| MMLU-Pro | 83.73 | 86.70 | 81.00 |
| LiveCodeBench v5 | 81.19 | 78.93 | 88.00 |
| Relative throughput @ 8k in / 64k out | 1.00× (baseline) | 0.13× | 0.45× |

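Headline: Qwen3.5-122B leads Nemotron on SWE-Bench Verified (66.40 vs 60.47) and MMLU-Pro, and GPT-OSS-120B leads on LiveCodeBench v5, but Nemotron is far faster than both at 8k in / 64k out and stays level with Qwen at the long-context extreme (91.75 vs 91.33 on RULER at 1M). GPT-OSS-120B does not survive 1M-token workloads, falling to 22.30.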
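The throughput row appears to be the reciprocal of the speedups quoted in the summary (up to 7.5× over Qwen3.5-122B and 2.2× over GPT-OSS-120B). That reading is an inference from the numbers rather than something the sources state, and the conversion is a one-liner:

```python
# Speedups quoted in the article's summary, measured at 8k in / 64k out.
NEMOTRON_SPEEDUP_OVER = {"Qwen3.5-122B": 7.5, "GPT-OSS-120B": 2.2}


def relative_throughput(speedup: float) -> float:
    """Throughput of the slower model as a fraction of the Nemotron baseline."""
    return 1.0 / speedup


relative = {
    model: round(relative_throughput(speedup), 2)
    for model, speedup in NEMOTRON_SPEEDUP_OVER.items()
}
print(relative)  # {'Qwen3.5-122B': 0.13, 'GPT-OSS-120B': 0.45}
```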

Use cases

- Software engineering agents: getting-started repos for OpenCode, OpenHands, and OpenClaw shipped on day one. Target tasks include junior-level pull requests, full-codebase comprehension, and issue localization down to the exact line.

- Cybersecurity triage — dynamic tool selection across 100+ security functions in long workflows.

- Enterprise automation — IT ticket flows, RAG, multi-step trajectory planning (e.g., assembling a 10-slide deck across multiple tool modalities).

- Sovereign AI — teams in Europe, India, Vietnam, and South Korea using the Nemotron stack to build region-specific localized models.

- Regulated industries — finance, healthcare, government use the open weights for on-prem deployment, fine-tuning, and code inspection.

Developers also get Reasoning Budgets through the API — Full Reasoning by default, a strict budget mode for latency-sensitive flows, and a Low Effort Mode for simple Q&A where deep thinking would just waste compute.

Limitations & pricing

- Verbose: ~110M output tokens on Artificial Analysis's Intelligence Index eval (vs ~15M average), roughly 7× the average. The per-token price therefore understates the cost per task.

- Hardware bar: 8× H100-80GB minimum. Running on DGX Spark requires the NVFP4 checkpoint.

- Not always #1: Qwen3.5-122B-A10B beats it on MMLU-Pro, several Tau-Bench segments, and SWE-Bench OpenHands. The story is balance — open access + speed + agent-native design — not single-benchmark supremacy.

- Pricing: $0.30/1M input, $0.75/1M output (median across providers). Blended 3:1 ≈ $0.41/1M tokens.

- Where to run it: build.nvidia.com, Hugging Face (BF16/FP8/NVFP4 checkpoints), Perplexity Pro, OpenRouter, NVIDIA NIM, plus Google Cloud, CoreWeave, Cloudflare, Baseten, DeepInfra, Fireworks AI, FriendliAI, Inference.net, Lightning AI, Modal, Nebius, and Together AI. Amazon Bedrock and Microsoft Azure incoming.
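The pricing and verbosity figures in the list above can be checked with a short calculation. The prices and token counts come from this article; the function names are illustrative, and pricing the ~15M-token average at Nemotron's output rate is an assumption made only to show the effect of verbosity on its own.

```python
INPUT_PRICE = 0.30   # USD per 1M input tokens (median across providers)
OUTPUT_PRICE = 0.75  # USD per 1M output tokens (median across providers)


def blended_price(input_price, output_price, input_parts=3, output_parts=1):
    """Weighted average price per 1M tokens for a given input:output mix."""
    total_parts = input_parts + output_parts
    return (input_parts * input_price + output_parts * output_price) / total_parts


def output_cost(output_tokens, price_per_million):
    """Cost in USD of generating `output_tokens` tokens."""
    return output_tokens / 1e6 * price_per_million


print(f"blended 3:1: ${blended_price(INPUT_PRICE, OUTPUT_PRICE):.4f} per 1M tokens")
print(f"110M output tokens: ${output_cost(110e6, OUTPUT_PRICE):.2f}")
print(f"15M output tokens:  ${output_cost(15e6, OUTPUT_PRICE):.2f}")
```

The blended figure works out to $0.4125, which rounds to the $0.41 quoted above. At the same output price, the ~110M-token eval run costs $82.50 in output tokens against $11.25 for a ~15M-token run, so verbosity matters more than the headline rate.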

What's next

NVIDIA has telegraphed the family roadmap clearly: Nano (30B, December 2025) → Super (120B/12B, March 2026) → Ultra (500B, later 2026). With weights, training recipes, NeMo Gym RL environments, and the underlying datasets all open, expect the next 90 days to be loud — fine-tunes, distillations, and Mamba-Transformer hybrids spawning across the open ecosystem.

The deeper signal: open frontier models can now compete with proprietary ones not by being bigger, but by being architecturally smarter. LatentMoE alone may end up being the takeaway researchers cite a year from now.

Sources: NVIDIA Technical Blog, NVIDIA Research, Hugging Face, Artificial Analysis, InfoWorld.