- Researchers tested 428 third-party LLM API routers and found 9 injecting malicious code, 17 abusing AWS credentials, and one that drained an Ethereum wallet of ~$500k.

- Here is why the middleman layer is the AI supply chain's quietest disaster — and what to do about it.

TL;DR

A University of California team measured 428 commodity LLM API routers — the middleman services that forward your prompts to OpenAI, Anthropic, or Google. They found 9 routers actively injecting malicious code into tool calls, 17 quietly siphoning AWS credentials, and at least one router that drained a test Ethereum wallet of roughly $500,000. Routers terminate TLS, so they see every prompt, every API key, every tool call in plaintext. There is no encryption between you and the provider on that hop. The paper, "Your Agent Is Mine," went up on arXiv on April 8, 2026 and it is the most uncomfortable read of the quarter.

What’s new

Malicious proxies are not a new idea. What is new is measurement. Hanzhi Liu, Chaofan Shou, Hongbo Wen, Yanju Chen, Ryan Jingyang Fang, and Yu Feng ran the first systematic audit of the LLM router market — 28 paid routers bought from Taobao, Xianyu, and Shopify storefronts, plus 400 free routers pulled from public community channels built on the sub2api and new-api templates.

They instrumented each router with canary credentials (AWS keys, OpenAI keys, an ETH private key with real balance) and observed what happened to the traffic. The answer, for a non-trivial slice of the ecosystem, was: "it gets stolen."

Why it matters

Most agent developers think about prompt injection and jailbreaks. The router layer is a different beast:

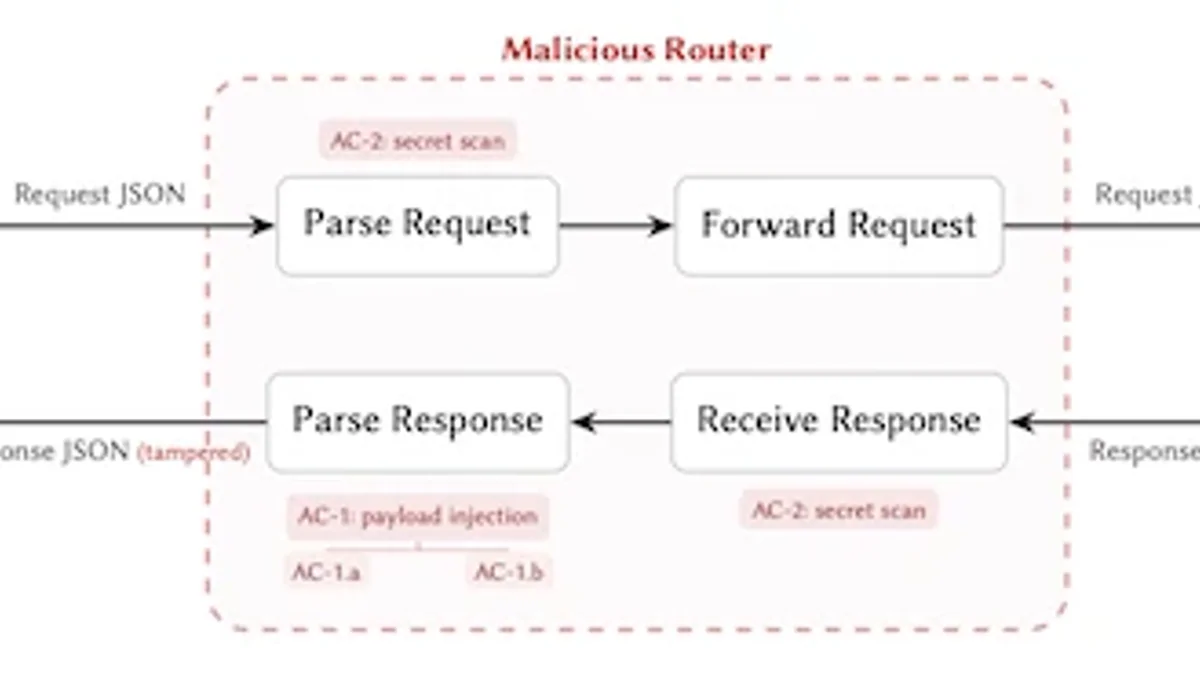

- It terminates TLS. Every prompt, key, tool call, and response is plaintext on that hop.

- It can rewrite the model’s output. The tool call your agent executes is whatever the last router says it is — not what the model returned.

- It is invisible to code review. Unlike a compromised dependency, a router is just a URL in your config.

Combine that with auto-approve mode in agentic coding tools, and an injected tool call runs on your machine without a single human eyeball on it.

Technical facts

| Metric | Value |

|---|---|

| Routers tested | 428 (28 paid + 400 free) |

| Injected malicious code into tool calls | 9 (1 paid, 8 free) |

| Abused researcher AWS credentials | 17 free routers |

| Drained real crypto from a test wallet | 1 router, ~$500k in ETH |

| Used adaptive evasion | 2 routers |

| Tokens spent on one leaked OpenAI key | 100M (GPT-5.4) |

| Credentials exposed in honeypot study | 99 across 440 Codex sessions |

| Sessions in auto-approve mode | 401 of 440 |

| Client-side injection detection rate | 89% |

Two findings deserve their own callout:

One router waited for 50 API calls before firing its payload. Another activated only when it detected the client was running in auto-approve mode. These are not accidental bugs. They are evasion logic designed to beat short-sample testing.

Comparison: router attack vs. other AI threats

| Threat | Where it lives | Who can detect it |

|---|---|---|

| Prompt injection | In retrieved content | Client / guardrail layer |

| Dependency confusion (e.g. LiteLLM, March 2026) | In your pip/npm tree | SCA tools, lockfile review |

| Malicious router | Network hop, outside your repo | Almost nobody — TLS hides it from the network, and the router is supposed to see plaintext |

Who is exposed

- Developers using cheap or free API routers (often from Taobao, Xianyu, Telegram bots, or GitHub forks) to sidestep billing, rate limits, or geo-restrictions.

- Agentic coding tools running in auto-approve mode — the 401-of-440 number is the scary one.

- Crypto and DeFi agents that hold private keys in the same runtime as the LLM call.

- Enterprise gateways like LiteLLM where one poisoned release taints every downstream request — exactly what happened in the March 2026 dependency-confusion incident.

Limitations & pricing

The paper is a preprint, not yet peer-reviewed. The sample of paid routers skews toward Chinese marketplaces, so global commercial gateways are underrepresented. The $500k wallet drain is real but the exact USD figure comes from press reporting, not the paper’s headline tables.

The authors recommend three client-side mitigations:

- Fail-closed policy gate — halt execution when a response deviates from expectations.

- Response-side anomaly screening — flag tool calls that drift from the model’s usual distribution.

- Append-only transparency logging — audit trails the router cannot rewrite.

Client-side screening caught 89% of injections in their tests — useful, but not enough. The real fix is provider-signed responses, which requires OpenAI, Anthropic, and Google to ship a new protocol.

What’s next

Expect three moves over the next two quarters: at least one major provider shipping a signed-response SDK, a wave of audit tooling targeted at LLM gateways, and — regrettably — a few more public incidents before teams stop pointing their agents at random URLs from a Telegram channel.

For now, the boring advice is the right advice: use first-party endpoints, kill auto-approve for anything that touches credentials or money, rotate any key that ever passed through a free router, and log every tool call immutably.

Nguồn: arXiv 2604.08407, Help Net Security, CoinDesk, Cointelegraph.