- A 1.5B audio model (LFM2.5-Audio) and a 1.2B tool-calling model (LFM2-1.2B-Tool) drive a real-time, voice-controlled car cockpit demo entirely on-device.

- No internet, no API keys, no cloud.

- Here's what's actually inside.

TL;DR

Liquid AI quietly shipped a voice-controlled car cockpit demo where everything runs locally on a laptop — speech recognition, intent understanding, tool calls, and TTS responses. Two open-weight models do the work: LFM2.5-Audio-1.5B for native speech-in / speech-out, and LFM2-1.2B-Tool for dispatching actions to the cockpit. No internet. No API keys. No cloud round-trip. The whole thing is in their cookbook repo and runs via llama.cpp on macOS ARM, Ubuntu, and WSL2.

What's new

Most voice assistants you have used — Alexa, Siri, Google Assistant — pipe your audio to a server, transcribe it, run an LLM, synthesize a reply, and stream it back. Even the "on-device" ones often fall back to cloud for the actual reasoning step.

Liquid AI's audio-car-cockpit example flips the script. The cockpit UI is plain JS/HTML/CSS in a browser; the backend is a Python server talking to the UI over WebSocket — Liquid describes it as "a widely simplified car CAN bus". Behind that bus, two models handle the full speech-to-action loop:

- LFM2.5-Audio-1.5B — a native audio-language model. It does ASR and TTS in the same network, in either interleaved mode (text + audio tokens streamed together for low time-to-first-audio) or sequential mode (clean STT or TTS).

- LFM2-1.2B-Tool — a Nano model from Liquid's specialized family, fine-tuned for tool calling. It reads the user's intent and emits structured calls that flip cockpit functions on the bus.

Both ship as GGUF checkpoints and run via llama.cpp, with a custom runner for the audio pipeline.

Why it matters

Three things are interesting here, and they apply far beyond cars:

- Privacy by construction. Cabin audio never leaves the device. For an OEM, that removes an entire class of data-handling and consent problems.

- Works in dead zones. Tunnels, rural roads, planes, basements. The assistant degrades to zero when the network does — currently the default for every cloud assistant.

- No per-request cost. Inference is just CPU time. There is no API meter ticking on every "turn down the AC".

The same constraints — privacy, offline, zero-marginal-cost — apply to robotics, IoT appliances, embedded medical devices, and any app where a cloud round-trip is either unsafe or just embarrassingly slow.

Technical facts

The audio model is the headline. LFM2.5-Audio-1.5B is built on a 1.2B hybrid conv+attention LM backbone, a 115M FastConformer audio encoder, an RQ-transformer, and a brand-new compact audio detokenizer. Liquid says the new detokenizer is 8× faster than the previous Mimi-based one at the same precision on a mobile CPU, and was quantization-aware-trained at INT4 so it deploys at low precision with almost no quality loss.

| Property | Value |

|---|---|

| Total parameters | 1.5B (1.2B LM + 115M encoder + heads) |

| Context | 32,768 tokens |

| Vocab | 65,536 text / 2049×8 audio (8 Mimi codebooks) |

| Native precision | bfloat16; INT4 via QAT |

| License | LFM Open License v1.0 |

| Inference | llama.cpp GGUF, MLX, vLLM, ONNX |

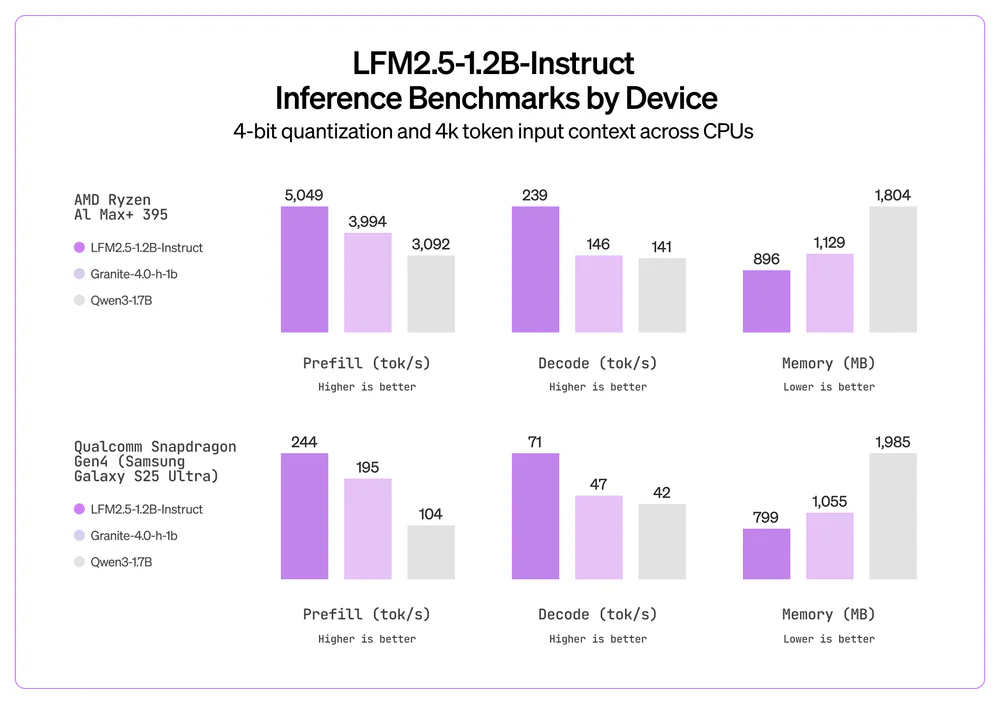

And the LM that drives the cockpit — LFM2-1.2B-Tool — is part of Liquid's "Nano" family of small specialists. It runs in roughly 719–856 MB on a laptop or phone CPU at Q4_0, and ~0.9 GB on a Snapdragon NPU. On an AMD Ryzen AI 9 HX 370 CPU it pushes about 2,975 prefill / 116 decode tokens/sec; on a Snapdragon Gen4 NPU it hits 4,391 prefill / 82 decode tokens/sec. On a Galaxy S25 Ultra CPU you still get 70 decode tok/s in 719 MB — vs Qwen3-1.7B at 40 tok/s in 1,306 MB on the same chip.

Comparison

The numbers that matter for an audio model are VoiceBench (overall conversational quality) and ASR Word Error Rate (transcription accuracy). LFM2.5-Audio-1.5B holds up surprisingly well against models 3–5× larger, and is basically tied with Whisper-large-V3 on transcription while also being able to talk back.

| Model | Size | VoiceBench (overall) | ASR WER avg (lower is better) | Speaks? |

|---|---|---|---|---|

| LFM2.5-Audio-1.5B | 1.5B | 54.92 | 7.53 | Yes |

| LFM2-Audio-1.5B (prev) | 1.5B | 52.77 | 9.38 | Yes |

| Whisper-large-V3 | 1.5B | — | 7.44 | No (ASR only) |

| Qwen2.5-Omni-3B | 5B | 63.57 | 7.90 | Yes |

| Moshi | 7B | 29.51 | — | Yes |

Translation: at 1.5B params, LFM2.5-Audio matches Whisper on transcription, beats a 7B Moshi on conversational quality, and trades a little VoiceBench score to Qwen2.5-Omni-3B but uses ~30% the parameters and actually fits on a phone.

Use cases

The cockpit is a showcase, not the ceiling. The same two-model pattern (audio for I/O, Nano-Tool for dispatch) works almost anywhere you want a hands-free interface that has to keep working without a network:

- Automotive HMI — climate, media, navigation, drive modes; latency-sensitive, privacy-sensitive, dead-zone-prone.

- Smartphone copilots — sub-1 GB memory budgets fit comfortably alongside the OS on a flagship Snapdragon.

- IoT and wearables — Qualcomm Dragonwing IQ9 NPU runs the LM at 0.9 GB and ~53 decode tok/s, enough for an always-on local assistant in an appliance or kiosk.

- Robotics — local intent + tool dispatch removes the cloud round-trip from the control loop.

- Regulated enterprise — healthcare, finance, defense; voice control inside the firewall via Liquid's on-prem inference + customization stack.

Limitations & pricing

A few real caveats:

- English only for LFM2.5-Audio-1.5B today. The text family has a Japanese variant (LFM2.5-1.2B-JP); the audio model does not yet.

- 32K context. Plenty for a driving session, not for long-document QA.

- Demo platforms: macOS ARM64, Ubuntu ARM64, Ubuntu x64, Ubuntu WSL2. Native Windows is not on the list. Building from source needs

cmakeand a C++ toolchain (or you can symlink an existingllama-server). - Pricing. Open weights, free to download, fine-tune, and ship — available on Hugging Face, Liquid's LEAP platform, the Liquid Playground, and Amazon Bedrock. The on-prem enterprise inference + customization stack is paid; pricing is not public, sales contact only.

What's next

LFM2.5 launched on January 5, 2026. Liquid has said publicly they will grow the LFM2.5 family across new sizes and reasoning capabilities, and that their commercial focus stays on edge and on-prem deployments — automotive, consumer electronics, healthcare, robotics. Expect more Nano specialists like LFM2-1.2B-Tool for narrow, well-shaped jobs, and likely a multilingual audio model to follow.

If you want to try the cockpit yourself, the cookbook README is the fastest path: make setup && make LFM2.5-Audio-1.5B-GGUF LFM2-1.2B-Tool-GGUF && make -j2 audioserver serve.

Sources: Liquid AI blog, Liquid4All/cookbook, LFM2.5-Audio-1.5B on Hugging Face, Liquid Docs.