- Hugging Face Transformers now supports SAM-3 Lite-Text — a distilled MobileCLIP student that replaces SAM-3's heavy CLIP ViT-L/14 text encoder, cutting parameters from 353.72M to 42.54M while keeping vision-language segmentation quality intact.

TL;DR

Hugging Face Transformers just added SAM-3 Lite-Text, a drop-in replacement for SAM-3's text encoder that is ~88% smaller with comparable quality. The heavy CLIP ViT-L/14 encoder (353.72M params) is swapped for a compact MobileCLIP student trained via knowledge distillation — 42.54M params in the smallest variant. Image and video vision-language segmentation keep working; you just load a lighter model.

What's new

The integration was announced by Niels Rogge on X. It ports the research from SAM3-LiteText: An Anatomical Study of the SAM3 Text Encoder for Efficient Vision-Language Segmentation (Zeng et al., arXiv 2602.12173) into the 🤗 Transformers API so you can load it next to any other Sam3Model checkpoint.

- Same API surface —

Sam3Processor/Sam3Modelunchanged. Tokenizer and 256-dim output embeddings match the teacher, so SAM-3's frozen fusion modules accept the new encoder with zero glue code. - Three student variants: MobileCLIP-S0, MobileCLIP-S1, MobileCLIP2-L — trading size against quality.

- Open source: code at SimonZeng7108/efficientsam3 (branch

sam3_litetext).

Why it matters

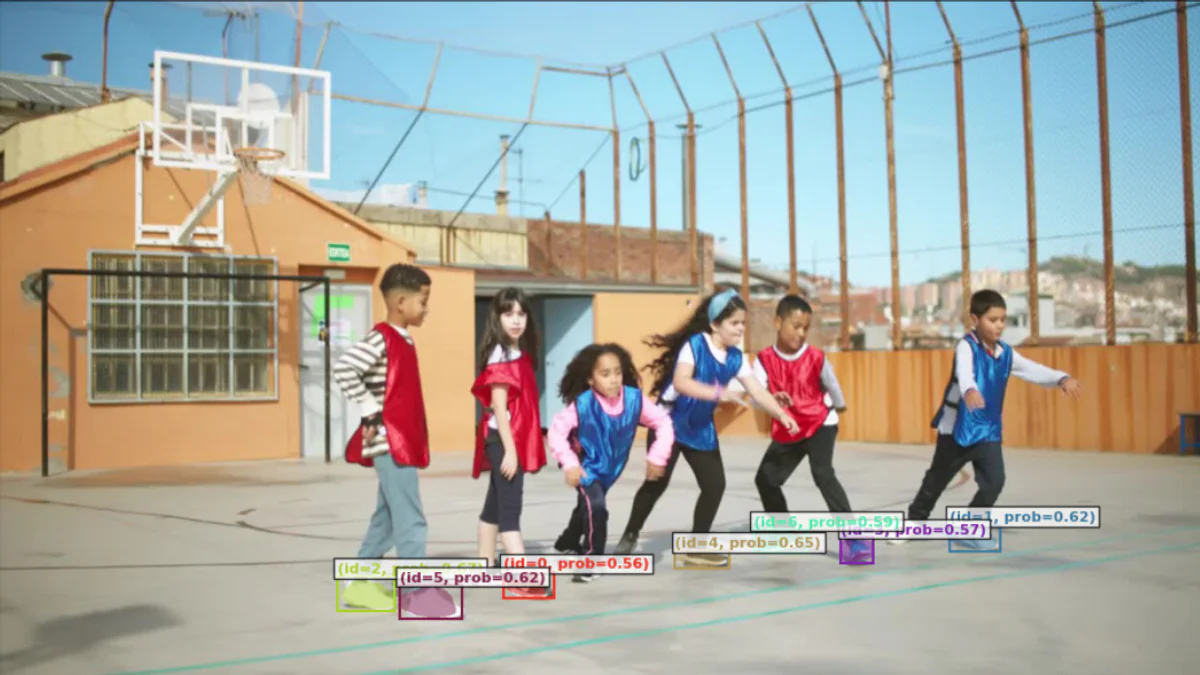

SAM-3 introduced Promptable Concept Segmentation — give it a noun phrase like "yellow school bus" and it returns masks for every matching instance across an image or video. It doubled prior-system accuracy on this task. The catch: a 353.72M-param CLIP text encoder is a non-starter for phones, AR glasses, or any edge device where VRAM is measured in hundreds of MB, not GB.

Lite-Text is what moves SAM-3 from "cloud-only demo" to "something you can realistically ship on-device." It also lowers the cost of running SAM-3 at scale on servers — a smaller text encoder means fewer GPU seconds per request and higher throughput per GPU. For teams doing batch annotation of massive image/video corpora, the savings compound quickly.

And because the integration landed directly in 🤗 Transformers, you don't need to clone a research repo or wire up custom loading code. Swap the checkpoint name, keep the rest of your pipeline — processors, tokenizers, inference loops — untouched.

Technical facts

The authors analysed 404,796 real segmentation prompts across SAM-3 benchmarks and found severe redundancy: most context windows are underutilized, vocabulary is sparse, and text embeddings occupy a low-dimensional manifold despite high nominal dimensionality. That's the permission slip to compress aggressively.

| Component | SAM-3 (original) | SAM-3 Lite-Text (MobileCLIP-S0) |

|---|---|---|

| Text encoder | CLIP ViT-L/14 | MobileCLIP-S0 student |

| Parameters | 353.72M | 42.54M |

| Reduction | — | 87.96% (~88%) |

| Output embedding | 256-dim | 256-dim |

| Tokenizer | CLIP ViT-L/14 | same |

| Fusion modules | trained | frozen, reused |

Distillation data: a 1% subset of Recap-DataComp-1B for the text encoder. EfficientSAM3 also ships compressed image backbones (RepViT, TinyViT, EfficientViT, as small as 680K params) if you want to shrink both sides of the model.

The three student variants trade size for quality: MobileCLIP-S0 is the smallest and fastest, MobileCLIP-S1 sits in the middle, and MobileCLIP2-L is the closest to the teacher's quality at ~123.8M params — still roughly one-third of the original. Pick whichever hits your latency and VRAM budget.

Comparison

Across image and video vision-language segmentation benchmarks, the MobileCLIP students deliver comparable metrics to the CLIP ViT-L/14 teacher. Exact numbers are per-benchmark in the paper, but the headline is: you keep SAM-3's quality while the text-encoder weights shrink by an order of magnitude. For on-device inference that is the difference between "won't fit" and "ships."

Use cases

- Mobile & AR: real-time text-prompted segmentation on phones and headsets.

- Robotics: concept-driven scene parsing where a robot hears a noun phrase and segments matching objects.

- Creator tools: on-device photo/video editing ("select the red car") without a round-trip to a GPU server.

- Research: faster iteration on SAM-3 pipelines when you don't need the full 700MB+ of text-encoder weights loaded.

Limitations & pricing

- Only the text encoder is compressed in this release — the image encoder stays full-size unless you layer in EfficientSAM3's image backbones.

- "Comparable" is not "identical" — expect small regressions on the hardest prompts.

- Paper is under CC BY-NC-ND 4.0 (non-commercial, no-derivs). Check the repo's own LICENSE for code reuse terms before production use.

- ONNX / CoreML exports for mobile are in development, not yet shipped.

What's next

EfficientSAM3 is a three-stage curriculum: Stage 1 (encoder distillation) weights landed in Dec 2025, fine-tuned variants in Jan 2026. Stages 2 and 3 — temporal memory compression and end-to-end fine-tuning — are still upcoming, and will likely push edge performance further. For now, if you're working with SAM-3 in Transformers, switching to the Lite-Text checkpoint is basically a free win.

Watch for ONNX and CoreML exports next; those will unlock "run SAM-3 entirely on a phone" demos that haven't been practical until now. The Hugging Face integration also makes it easy for the community to benchmark and fine-tune the Lite-Text students on domain-specific prompts — medical imaging, satellite imagery, industrial inspection — where a smaller, task-tuned text encoder often beats a bigger general-purpose one.

Nguồn: Niels Rogge / X, arXiv 2602.12173, EfficientSAM3, Hugging Face Transformers docs.