- Anthropic moved built-in memory for Claude Managed Agents out of research preview and into public beta.

- Agents can now persist what they learn across sessions through a client-side file directory — unlocking multi-session coding, meeting prep, and personalized bots without rebuilding state every time.

TL;DR

On April 23, 2026, Anthropic promoted built-in memory for Claude Managed Agents from research preview to public beta. Agents can now create, read, update and delete files in a persistent /memories directory, so they remember what they learned last session instead of starting cold. Memory is client-side — you control the storage backend — and is eligible for Zero Data Retention.

What's new

Managed Agents launched in public beta on April 8, 2026, but memory stayed gated as a research preview behind an access request. With today's announcement, memory is on by default for every API account whose requests include the managed-agents-2026-04-01 beta header.

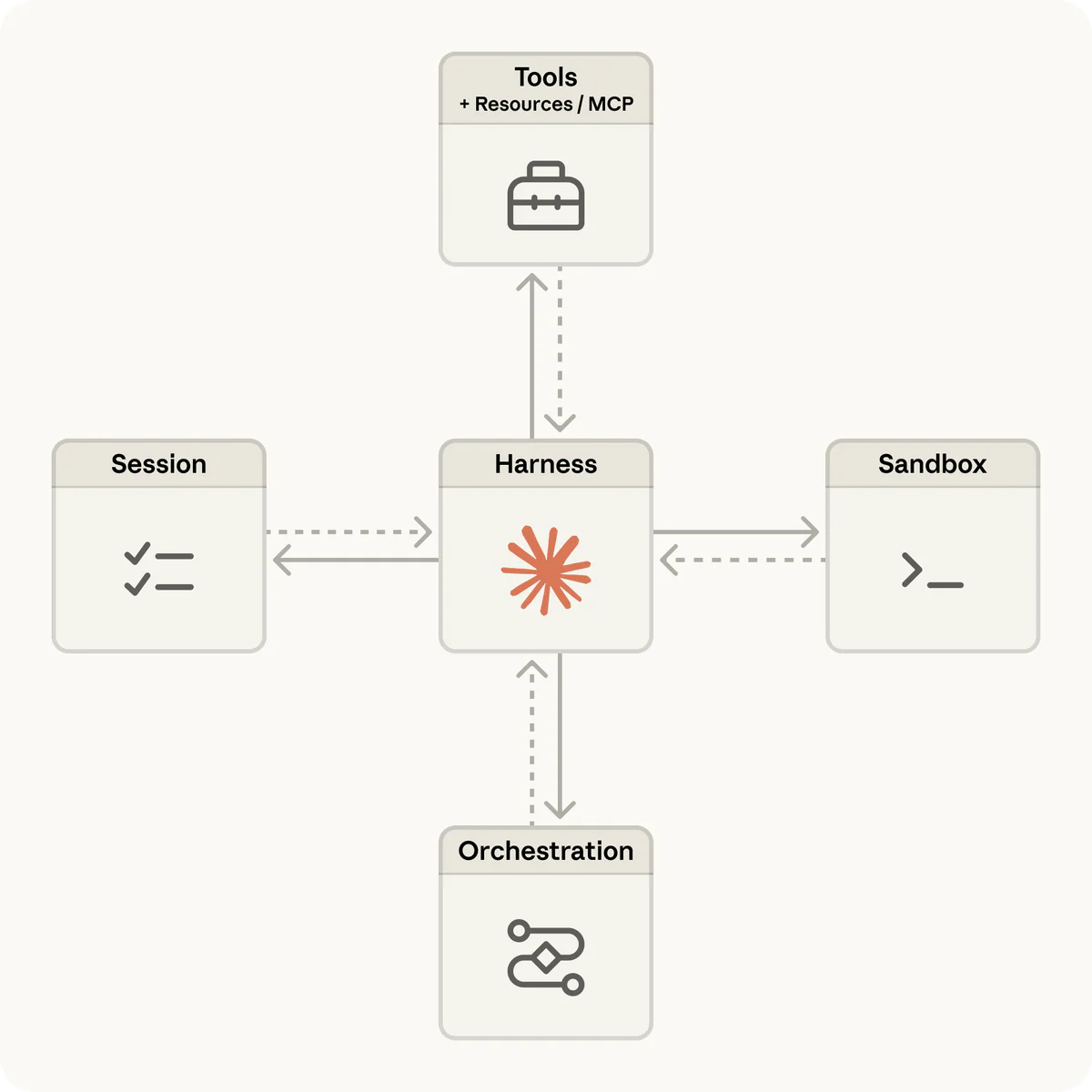

The underlying memory tool itself first went to beta on September 29, 2025 on the Claude Developer Platform. What is new in April 2026 is that the managed harness — the same harness that handles sandboxing, checkpointing, prompt caching and compaction — now ships memory as a first-class primitive alongside every other agent feature.

Why it matters

The old way to give an agent "memory" was brute force: dump everything potentially relevant into the context window at the start of the session. For long-running work that approach collapses: the window fills up, token cost explodes, and the agent starts losing the thread.

Memory is the key primitive for just-in-time context retrieval. The agent stores what it learns as files and pulls them back only when needed. The active window stays focused on what matters right now; everything else waits on disk until the next tool call needs it. Combined with compaction (server-side summarization of old turns), a single project can now span hours or days without the harness forgetting the plan.

Technical facts

- Client-side, file-based: Claude calls six commands on /memories: view, create, str_replace, insert, delete, rename. Your application executes them locally against any backend you like (filesystem, DB, encrypted blob, S3).

- +10 points task success: In Anthropic's internal testing on structured file generation, Managed Agents improved outcome task success by up to 10 points over a standard prompt-and-response loop, with the largest gains on the hardest problems.

- ZDR eligible: because the data never leaves your infrastructure, the feature qualifies for Zero Data Retention for organizations on a ZDR contract.

- Pairs with compaction: compaction summarizes old conversation on the server; memory persists critical facts across those summarization boundaries so nothing gets lost in the rollup.

- Hard limits: files over 999,999 lines return an error. Path traversal protection (reject ../, ..\, %2e%2e%2f) is your responsibility as the backend operator.
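To make the client-side contract concrete, here is a minimal Python sketch of a handler for the six commands, backed by a local directory and rejecting the traversal patterns listed above. It is an illustration under assumptions, not Anthropic's reference implementation: the function names, the `MemoryPathError` class, and the input field names (`path`, `file_text`, `old_str`, `new_str`, `insert_line`, `insert_text`, `old_path`, `new_path`) are assumed here and should be checked against the memory tool documentation.

```python
import shutil
from pathlib import Path
from urllib.parse import unquote

VIRTUAL_ROOT = "/memories"


class MemoryPathError(ValueError):
    """Raised when a requested path would escape the memory directory."""


def resolve_memory_path(root: Path, requested: str) -> Path:
    """Map a virtual /memories path onto `root`, rejecting traversal attempts."""
    # Decode percent-escapes and normalise backslashes so that
    # %2e%2e%2f and ..\ are checked in the same form as ../
    normalised = unquote(requested).replace("\\", "/")
    if normalised != VIRTUAL_ROOT and not normalised.startswith(VIRTUAL_ROOT + "/"):
        raise MemoryPathError(f"path must be under {VIRTUAL_ROOT}: {requested!r}")
    if ".." in normalised.split("/"):
        raise MemoryPathError(f"path traversal rejected: {requested!r}")
    base = root.resolve()
    candidate = (base / normalised[len(VIRTUAL_ROOT):].lstrip("/")).resolve()
    # Second check after resolving: symlinks must not lead outside the root.
    if candidate != base and base not in candidate.parents:
        raise MemoryPathError(f"path escapes memory root: {requested!r}")
    return candidate


def handle_memory_command(root: Path, call: dict) -> str:
    """Execute one memory command (field names are assumptions of this sketch)."""
    command = call["command"]
    if command == "rename":
        source = resolve_memory_path(root, call["old_path"])
        target = resolve_memory_path(root, call["new_path"])
        target.parent.mkdir(parents=True, exist_ok=True)
        source.rename(target)
        return f"Renamed {call['old_path']} to {call['new_path']}"

    path = resolve_memory_path(root, call["path"])
    if command == "view":
        if path.is_dir():
            return "\n".join(sorted(child.name for child in path.iterdir()))
        return path.read_text(encoding="utf-8")
    if command == "create":
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(call["file_text"], encoding="utf-8")
        return f"Created {call['path']}"
    if command == "str_replace":
        text = path.read_text(encoding="utf-8")
        if call["old_str"] not in text:
            raise ValueError("old_str not found in file")
        # Replace only the first occurrence.
        path.write_text(text.replace(call["old_str"], call["new_str"], 1), encoding="utf-8")
        return f"Edited {call['path']}"
    if command == "insert":
        lines = path.read_text(encoding="utf-8").splitlines(keepends=True)
        # insert_line is treated as "insert after this many existing lines".
        lines.insert(call["insert_line"], call["insert_text"])
        path.write_text("".join(lines), encoding="utf-8")
        return f"Inserted into {call['path']}"
    if command == "delete":
        if path.is_dir():
            shutil.rmtree(path)
        else:
            path.unlink()
        return f"Deleted {call['path']}"
    raise ValueError(f"unknown command: {command!r}")
```

Because the backend is yours, the same dispatch shape could front a database or object store instead of the filesystem; only `resolve_memory_path` and the read/write calls would change.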

Comparison

Most competitor memory layers (OpenAI's ChatGPT memory, mem0, etc.) run server-side — the provider manages embeddings or summaries and exposes a recall API. Anthropic's bet is the opposite: the memory lives on your disk, in files you can inspect and version, driven by simple commands the model already understands from text-editor tools.

| Approach | Storage | Control | Compliance |
|---|---|---|---|
| Provider-managed memory (OpenAI, mem0) | Vendor-hosted embeddings / summaries | Opaque | Vendor-dependent |
| Claude memory tool | Client-side files in /memories | Full (you pick the backend) | ZDR eligible |
| Custom RAG pipeline | Your vector DB | Full, but you build retrieval | Your problem |

Trade-off: you own the backend and the security surface. The upside is auditability and data residency — both non-negotiable for enterprise buyers.

Use cases

- Multi-session coding: an initializer session writes a progress log and feature checklist into memory; every subsequent session opens by reading those files and recovers state in seconds. Sentry paired Seer, their debugging agent, with a Claude Managed Agent that writes the patch and opens the PR — shipped in weeks instead of months.

- Knowledge work inside Notion, Slack, Asana: agents join a project, pick up tasks, deliver spreadsheets, slides and apps. Notion is rolling this out in Custom Agents private alpha; Rakuten deploys a new specialist agent per week across engineering, sales, marketing and finance.

- Meeting prep (3x faster to build): an agent researches every participant before a call using memory + web search + calendar/contact MCP connectors.

- Personalized consumer apps: a barista bot that remembers "the usual" across visits. The same pattern powers support bots, tutors and coaches that actually remember the user between sessions.
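The multi-session coding pattern above (an initializer writes a checklist and progress log, later sessions read them back) can be sketched with plain files. This is only an illustration of the file layout: in practice the agent would write these files itself through memory commands, and the file names `feature_checklist.json` and `progress.md` as well as the three function names are hypothetical.

```python
import json
from pathlib import Path

CHECKLIST = "feature_checklist.json"  # hypothetical file name
PROGRESS_LOG = "progress.md"          # hypothetical file name


def initialize_project(memory_dir: Path, features: list[str]) -> None:
    """First session: write an empty checklist and a fresh progress log."""
    memory_dir.mkdir(parents=True, exist_ok=True)
    checklist = {feature: False for feature in features}
    (memory_dir / CHECKLIST).write_text(json.dumps(checklist, indent=2), encoding="utf-8")
    (memory_dir / PROGRESS_LOG).write_text("# Progress log\n", encoding="utf-8")


def record_progress(memory_dir: Path, feature: str, note: str) -> None:
    """Mark a feature done and append one line to the progress log."""
    checklist_path = memory_dir / CHECKLIST
    checklist = json.loads(checklist_path.read_text(encoding="utf-8"))
    if feature not in checklist:
        raise KeyError(feature)
    checklist[feature] = True
    checklist_path.write_text(json.dumps(checklist, indent=2), encoding="utf-8")
    with (memory_dir / PROGRESS_LOG).open("a", encoding="utf-8") as log:
        log.write(f"- {feature}: {note}\n")


def resume_session(memory_dir: Path) -> dict:
    """Later sessions: recover state from the two files instead of the context window."""
    checklist = json.loads((memory_dir / CHECKLIST).read_text(encoding="utf-8"))
    log_lines = (memory_dir / PROGRESS_LOG).read_text(encoding="utf-8").splitlines()
    return {
        "done": [name for name, finished in checklist.items() if finished],
        "pending": [name for name, finished in checklist.items() if not finished],
        # The first line is the heading, so an entry exists only from line two on.
        "last_entry": log_lines[-1] if len(log_lines) > 1 else None,
    }
```

A new session would call `resume_session` first and load only the pending items into context, which is the just-in-time retrieval idea applied to project state.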

Limitations & pricing

Pricing is consumption-based. Runtime and tokens are the two main components, with web search billed per call:

- Session runtime: $0.08 per session-hour, metered to the millisecond. The meter pauses while the agent is idle waiting for user input or a tool confirmation — so a 20-minute wait costs nothing.

- Tokens: standard API rates. Opus 4.6 is $5 input / $25 output per million tokens, Sonnet 4.6 is $3 / $15. Prompt caching is built in (cache read = 0.1x input).

- Web search inside a session: $10 per 1,000 calls.
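To see how these rates combine, the following Python sketch estimates the cost of one session from the figures quoted above. It is back-of-the-envelope arithmetic only: the model labels, the `SessionUsage` fields, and the function name are invented for this example, and it ignores anything the article does not quote, such as cache-write pricing.

```python
from dataclasses import dataclass

RUNTIME_PER_SESSION_HOUR = 0.08   # USD, billed only while the session is active
WEB_SEARCH_PER_CALL = 10 / 1000   # USD, i.e. $10 per 1,000 calls
CACHE_READ_MULTIPLIER = 0.1       # cache reads cost 0.1x the input rate

# (input, output) USD per million tokens; the keys are labels for this sketch only.
TOKEN_RATES = {
    "opus-4.6": (5.0, 25.0),
    "sonnet-4.6": (3.0, 15.0),
}


@dataclass
class SessionUsage:
    active_hours: float        # excludes idle time waiting on the user
    input_tokens: int          # uncached input tokens
    cached_input_tokens: int   # input tokens served from the prompt cache
    output_tokens: int
    web_searches: int = 0


def estimate_session_cost(model: str, usage: SessionUsage) -> dict:
    """Return a per-component cost breakdown in USD."""
    input_rate, output_rate = TOKEN_RATES[model]
    runtime = usage.active_hours * RUNTIME_PER_SESSION_HOUR
    tokens = (
        usage.input_tokens * input_rate
        + usage.cached_input_tokens * input_rate * CACHE_READ_MULTIPLIER
        + usage.output_tokens * output_rate
    ) / 1_000_000
    web_search = usage.web_searches * WEB_SEARCH_PER_CALL
    return {
        "runtime": runtime,
        "tokens": tokens,
        "web_search": web_search,
        "total": runtime + tokens + web_search,
    }


example = estimate_session_cost(
    "sonnet-4.6",
    SessionUsage(
        active_hours=2.0,
        input_tokens=3_000_000,
        cached_input_tokens=10_000_000,
        output_tokens=500_000,
        web_searches=40,
    ),
)
print({name: round(value, 2) for name, value in example.items()})
```

For this made-up workload the total comes to about $20.06, of which only $0.16 is runtime; tokens account for $19.50 and web search for $0.40.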

Known trade-offs:

- The 50% Batch API discount does not apply to Managed Agents sessions. If batch savings are load-bearing for your workload, stick with the Messages API.

- Hidden token overhead: every bash, file read and web fetch feeds tokens back into the context. A tool-heavy agent can burn tokens that dwarf the $0.08 runtime fee.

- Memory files are capped at 999,999 lines. Path-traversal hardening is on you.

- Only available via the Claude API directly — Bedrock / Vertex / Foundry billing does not cover Managed Agents yet.

- Rate limits: 300 create/min, 600 read/min per organization.
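One way to stay under the per-minute rate limits is to throttle on the client before a request is sent. The sketch below is a generic sliding-window limiter, not part of any Anthropic SDK; the class name is invented, and mapping "create" and "read" to specific API calls is left as an assumption for the reader to confirm against the docs.

```python
import time
from collections import deque
from typing import Callable


class SlidingWindowLimiter:
    """Allow at most `max_calls` within any rolling `window_seconds` interval."""

    def __init__(
        self,
        max_calls: int,
        window_seconds: float = 60.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._max_calls = max_calls
        self._window = window_seconds
        self._clock = clock  # injectable so the limiter can be tested without sleeping
        self._calls: deque[float] = deque()

    def try_acquire(self) -> bool:
        """Record a call and return True if it fits in the window, else return False."""
        now = self._clock()
        # Drop timestamps that have aged out of the window.
        while self._calls and now - self._calls[0] >= self._window:
            self._calls.popleft()
        if len(self._calls) < self._max_calls:
            self._calls.append(now)
            return True
        return False


# Limits quoted in this article, applied per organization.
create_limiter = SlidingWindowLimiter(max_calls=300)
read_limiter = SlidingWindowLimiter(max_calls=600)
```

A caller would check `create_limiter.try_acquire()` before each create request and back off or queue when it returns False. Since the limits apply per organization, a single in-process limiter only helps if all traffic flows through one process; a shared counter would be needed otherwise.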

What's next

Two adjacent capabilities remain gated in research preview:

- Outcomes — you define success criteria, the agent self-evaluates and iterates until it gets there. This is the piece that turns Managed Agents from "better prompt loop" into autonomous work.

- Multi-agent coordination — a primary agent spins up and directs sub-agents to parallelize complex workflows. Cost implications are still unclear because each sub-agent carries its own runtime meter.

Beta-era pricing is not guaranteed to survive GA. If you are building production dependencies on Managed Agents, assume the $0.08/session-hour number and the exact billing model may shift when the beta label comes off.

Sources: Anthropic — Claude Managed Agents, Claude API Docs — Memory tool, Claude API Docs — Managed Agents overview, WaveSpeedAI — pricing breakdown, Leonie Monigatti — exploring the memory tool.