- LlamaIndex released ParseBench — 2,078 enterprise pages, 169,011 rules, 14 parsers tested across 5 dimensions.

- LlamaParse Agentic leads at 84.88%; GPT-5 Mini and Haiku 4.5 collapse on visual grounding.

- Why text-similarity OCR benchmarks are obsolete for the agent era.

TL;DR

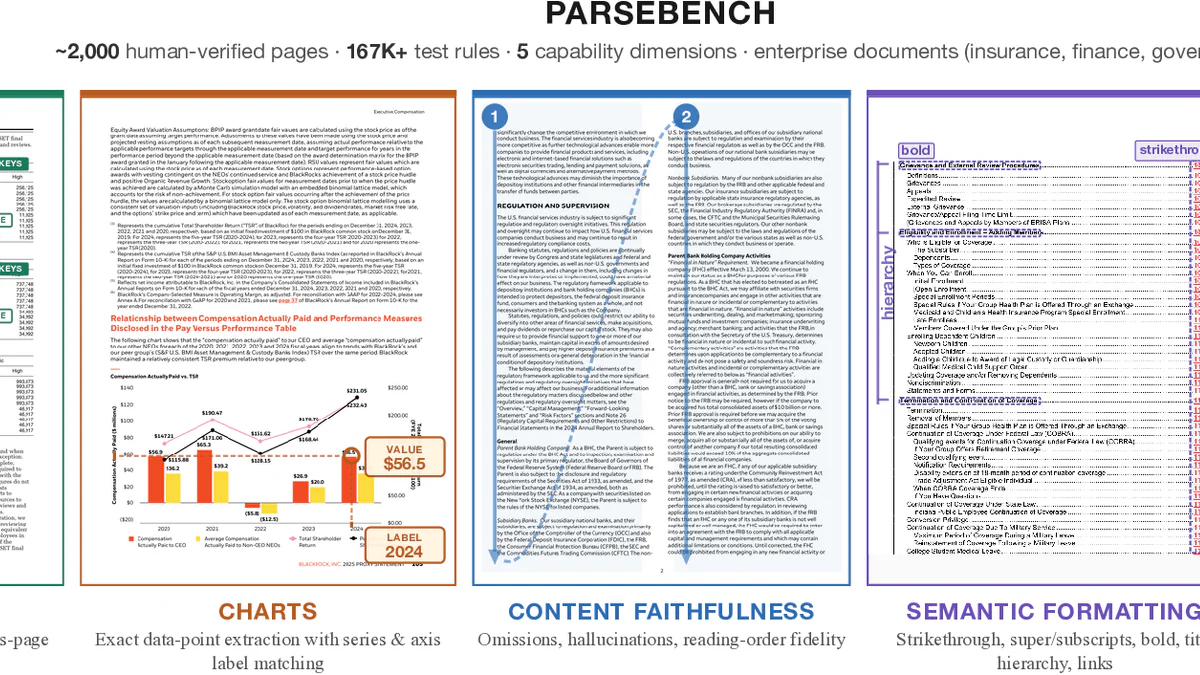

LlamaIndex just released ParseBench, the first document parsing benchmark designed for AI agents — not for humans reading PDFs. It tests 14 parsers on 2,078 enterprise pages with 169,011 rules across 5 dimensions: tables, charts, content faithfulness, semantic formatting, and visual grounding. Headline: LlamaParse Agentic leads at 84.88%; Gemini 3 Flash is the best external VLM at 71.0%; GPT-5 Mini and Anthropic Haiku 4.5 collapse on visual grounding (<10%). The benchmark is open-source (Apache-2.0) and available on HuggingFace, GitHub, and arXiv.

What's new

The bar for OCR has shifted. As LlamaIndex puts it: from "good enough for a human to read" to "reliable enough for an agent to act on." Existing benchmarks like OmniDocBench, OCRBench v2, and olmOCR-Bench rely on text-similarity metrics (BLEU, edit distance) that miss agent-critical failures — a transposed table header, a chart reduced to raw OCR text, a strikethrough silently dropped. ParseBench introduces what the team calls semantic correctness: does the parsed output preserve enough structure and meaning for correct downstream decisions?

The benchmark covers ~2,000 human-verified pages from real enterprise documents — insurance (SERFF filings), financial reports, government submissions — stratified across 5 capability dimensions:

- Tables — structural fidelity for merged cells, hierarchical headers, cross-page continuity.

- Charts — exact data-point extraction with correct labels from bar/line/pie/compound charts.

- Content Faithfulness — omissions, hallucinations, and reading-order violations.

- Semantic Formatting — strikethrough, super/subscript, bold, hyperlinks (formatting that carries meaning).

- Visual Grounding — every extracted element traceable back to its source location for auditability.

Why it matters

In agentic workflows, small parsing errors become decision errors. An insurance agent approving a claim reads a specific cell in a coverage table — if the header is misaligned, it reads the wrong column. A financial analyst agent quoting a price might quote a struck-through (invalidated) price as the current one. These failures don't show up in BLEU scores, but they break production.

"What matters is not whether a parser produces text that looks similar to a reference, but whether it preserves the structure and meaning needed for correct downstream decisions."

Most prior benchmarks miss the mark on enterprise content. OmniDocBench draws only 6% of pages from enterprise sources; olmOCR-Bench skews 42% toward arXiv math papers. ParseBench is the first to score all 5 dimensions on the documents that actually drive automation revenue.

Technical facts

| Dimension | Pages | Docs | Rules | Metric |

|---|---|---|---|---|

| Tables | 503 | 284 | — | GTRM (GriTS + TableRecordMatch) |

| Charts | 568 | 99 | 4,864 | ChartDataPointMatch |

| Content Faithfulness | 506 | 506 | 141,322 | Content Faithfulness Score |

| Semantic Formatting | 476 | 476 | 5,997 | Semantic Formatting Score |

| Visual Grounding | 500 | 321 | 16,325 | Element Pass Rate |

| Total (unique) | 2,078 | 1,211 | 169,011 | — |

Two new metrics matter: TableRecordMatch treats a table as a bag of records (insensitive to column/row order, brutal on transposed headers), and ChartDataPointMatch verifies annotated data points in the parser's output table — tolerant of formatting differences (currency, units, separators) but unforgiving on missing values.

Comparison: the leaderboard

| Method | Overall | Tables | Charts | Content Faith. | Format | Visual Ground. |

|---|---|---|---|---|---|---|

| LlamaParse Agentic | 84.88 | 90.74 | — | 89.68 | 85.24 | 80.62 |

| LlamaParse Cost Effective | 71.89 | — | — | — | 73.04 | — |

| Google Gemini 3 Flash | 71.0 | 89.9 | 64.8 | 86.2 | 58.4 | 56.0 |

| Reducto | 67.8 | 70.3 | 57.0 | 86.4 | 56.8 | 68.7 |

| Qwen 3 VL | 62.0 | 74.7 | 28.2 | 87.6 | 64.2 | 55.2 |

| Azure Doc Intelligence | 59.6 | 86.0 | 1.6 | 84.9 | 51.9 | 73.8 |

| Dots OCR 1.5 | 55.8 | 85.2 | 0.9 | 90.0 | 47.0 | 55.8 |

| Docling (OSS) | 50.6 | 66.4 | 52.8 | 66.9 | 1.0 | 66.1 |

| AWS Textract | 47.9 | 84.6 | 6.0 | 74.8 | 3.7 | 70.4 |

| OpenAI GPT-5 Mini | 46.8 | 69.8 | 30.1 | 82.3 | 45.8 | 6.2 |

| Anthropic Haiku 4.5 | 45.2 | 77.2 | 13.8 | 78.7 | 49.4 | 6.7 |

Three patterns jump out:

- Charts are the great divider. Only 4 methods crack 50%. Most specialized parsers score <6% — they output raw OCR text instead of structured data tables.

- Formatting is widely ignored. Range: Docling at 1.0% to LlamaParse Agentic at 85.24%. Most parsers strip strikethrough/superscripts as cosmetic.

- Visual grounding separates VLMs from layout-aware systems. GPT-5 Mini and Haiku 4.5 score under 8%; Azure (73.8%) and Textract (70.4%) crush them because they were built around layout detection.

Use cases

ParseBench is built for agent workflows in industries where parsing errors compound into financial or compliance risk:

- Insurance — claims approval reading specific table cells; SERFF regulatory filings with merged headers.

- Finance — due diligence, financial models, analyst pipelines parsing 10-K filings and earnings reports.

- Legal & contracts — strikethrough preservation matters (a struck-through clause is not the active clause).

- Government/regulatory — submissions where every value must be traceable to source for audit.

Limitations & pricing

The headline finding: no method is consistently strong across all 5 dimensions. Even on "mostly solved" content faithfulness, the ~90% top scores mean agents still hit omissions or hallucinations on 1 in 10 pages — unacceptable for high-stakes workflows.

On compute-vs-quality: throwing more thinking budget at VLMs gives diminishing returns. Gemini gains ~5 points moving from minimal to high thinking — at 4× the cost. GPT-5 Mini and Haiku 4.5 see even smaller gains at 3–4× cost. Reducto's agentic mode at ~5¢/page (the most expensive option) yields only ~4 points over its base.

LlamaParse pricing sits on the Pareto frontier:

- Agentic: ~1.2¢/page · 84.88% — outperforms all others at any cost level.

- Cost Effective: <0.4¢/page · 71.89% — competitive with Gemini at minimal thinking.

Availability: Apache-2.0 license. Dataset on HuggingFace (llamaindex/ParseBench, 592 MB, 169,011 rows). Code: run-llama/ParseBench with 90+ pre-configured pipelines. Paper: arXiv:2604.08538. Website: parsebench.ai.

What's next

The team flags an official public leaderboard "soon," plus three roadmap directions: greater scale and broader enterprise domain coverage; extending beyond parsing into structured extraction and document classification/splitting; and harder evaluation settings — ultra-high-resolution pages, visually dense technical documents, adversarial enterprise cases.

If you're building an agent that touches PDFs, ParseBench gives you the first honest answer to a question that's been impossible to benchmark properly: which parser won't silently corrupt your agent's context?

Sources: LlamaIndex blog, arXiv paper, GitHub repo, HuggingFace dataset.