- ClickHouse just shipped full Terraform + OpenAPI coverage for ClickPipes.

- CDC connectors (Postgres, MySQL, MongoDB), BigQuery, and Azure Blob Storage are now fully CRUD-manageable and importable into existing state.

- Here's what's inside v3.14.0 and why it matters for CI/CD-driven data teams.

TL;DR

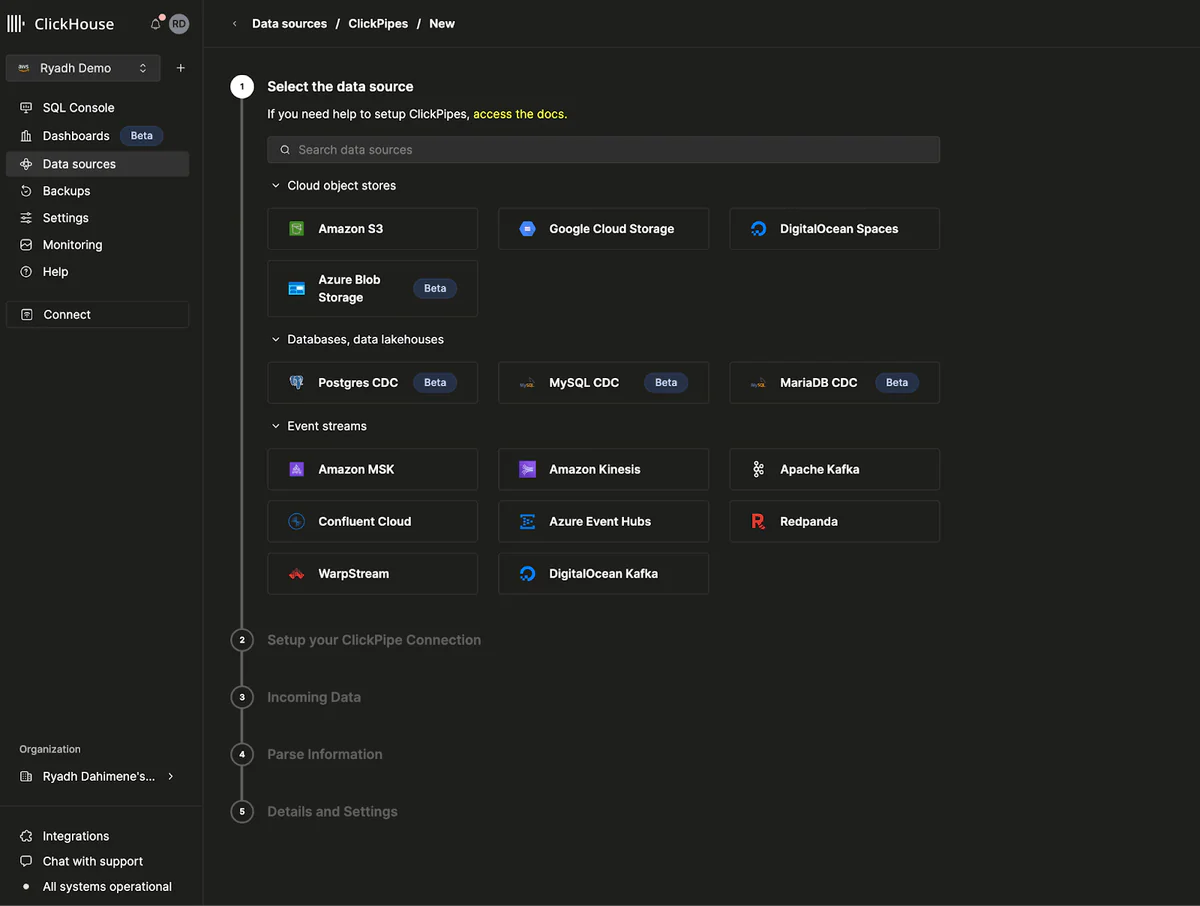

ClickHouse has officially promoted ClickPipes support in its Terraform provider and OpenAPI to General Availability. The latest ClickHouse/clickhouse provider, v3.14.0 (3.6M+ installs), ships full CRUD for every ClickPipes source type (Postgres CDC, MySQL CDC, MongoDB CDC, BigQuery, Kafka, Kinesis, and S3/GCS/Azure Blob object storage), plus `terraform import` to pull existing pipelines into state without a destroy/recreate cycle.

If you've been clicking through the ClickHouse Cloud UI to stand up ingestion pipelines, you can now version, review, and deploy them like any other piece of infrastructure.
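Adopting the GA release usually starts with pinning the provider in the root module. The sketch below uses the `ClickHouse/clickhouse` registry address named above and assumes you want any 3.x release from 3.14 onward. Credentials and the `provider` block are intentionally left out.

```hcl
terraform {
  required_providers {
    clickhouse = {
      # Registry address of the ClickHouse provider.
      source = "ClickHouse/clickhouse"
      # "~> 3.14" allows 3.14 and later 3.x releases, but never 4.0.
      version = "~> 3.14"
    }
  }
}
```

If you also commit the generated `.terraform.lock.hcl`, CI and local runs resolve the same provider build.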

What's new

The announcement, shared on X and detailed in the Evolution of ClickPipes post, closes out an 11-month journey from 3.2.0-alpha1 (May 2025, Kafka + object storage only) to a stable GA with CDC connectors fully supported. The current provider docs expose eight resources, including:

- `clickhouse_clickpipe`: the main pipeline resource

- `clickhouse_clickpipe_cdc_infrastructure`: a dedicated CDC infrastructure primitive

- `clickhouse_clickpipes_reverse_private_endpoint`: for PrivateLink-style ingress

- `clickhouse_service`, `clickhouse_organization_settings`, and private endpoint helpers

Every source type a ClickPipes user cares about is covered: Kafka (Confluent, MSK, Redpanda, Azure Event Hubs, WarpStream), object storage (S3, GCS, Azure Blob), Kinesis, BigQuery, and the three CDC sources — Postgres, MySQL, MongoDB.

Why it matters

Data pipelines have long been the last part of the analytics stack to resist Infrastructure-as-Code. UI-configured CDC jobs are notoriously fragile: no diffs, no review, no easy replay across staging and prod. With the Terraform provider at GA, a ClickPipes change now lives where every other infra change lives — a pull request, reviewed, planned, and applied.

Two concrete wins:

- Multi-environment consistency. The same module can spin up a sandbox pipeline with 1 replica and a production pipeline with 10 — with identical schema mappings and field definitions.

- Import existing pipelines. Teams who built their pipelines in the Cloud console don't have to rebuild them: `terraform import clickhouse_clickpipe.kafka_pipe <id>` pulls state in cleanly.
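Both wins can be expressed in plain Terraform. This sketch assumes a hypothetical local module at `./modules/clickpipe` that wraps a single `clickhouse_clickpipe` resource named `this` and exposes `service_id` and `replicas` variables. The module path, variable names, and service ID placeholders are illustrative. The `import` block (Terraform 1.5+) is the declarative counterpart of `terraform import`. It keeps the original `<id>` placeholder because the exact ID format is not given here.

```hcl
# modules/clickpipe/variables.tf (hypothetical module)
variable "service_id" {
  type        = string
  description = "ClickHouse Cloud service that receives the data."
}

variable "replicas" {
  type    = number
  default = 1

  # Mirrors the documented 1-10 replica range so bad values fail at plan time.
  validation {
    condition     = var.replicas >= 1 && var.replicas <= 10 && floor(var.replicas) == var.replicas
    error_message = "replicas must be an integer between 1 and 10."
  }
}

# modules/clickpipe/main.tf would pass these through to the resource, e.g.
#   service_id = var.service_id
#   scaling {
#     replicas = var.replicas
#   }

# Root module: the same module, sized per environment.
module "sandbox_orders" {
  source     = "./modules/clickpipe"
  service_id = "<sandbox-service-id>"
  replicas   = 1
}

module "prod_orders" {
  source     = "./modules/clickpipe"
  service_id = "<prod-service-id>"
  replicas   = 10
}

# Adopt a console-built pipe into the prod module's state (Terraform 1.5+).
# Assumes the module names its resource clickhouse_clickpipe.this (hypothetical).
import {
  to = module.prod_orders.clickhouse_clickpipe.this
  id = "<id>"
}
```

Because both environments use one module, the schema mappings and field definitions stay identical. Only the inputs differ.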

Technical facts

Inside a clickhouse_clickpipe block, the scaling limits are explicit:

| Property | Range | Default |
|---|---|---|
| `replicas` | 1–10 | 1 |
| `replica_cpu_millicores` | 125–2000 millicores | none |
| `replica_memory_gb` | 0.5–8.0 GB | none |
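The sizing ranges above can also be checked at plan time, before anything reaches the API. This sketch assumes, as the table suggests, that `replica_cpu_millicores` and `replica_memory_gb` sit next to `replicas` in the `scaling` block. The variable names and the 500 millicore / 2 GB defaults are illustrative, not recommendations.

```hcl
variable "replica_cpu_millicores" {
  type    = number
  default = 500

  # Documented range: 125-2000 millicores per replica.
  validation {
    condition     = var.replica_cpu_millicores >= 125 && var.replica_cpu_millicores <= 2000
    error_message = "replica_cpu_millicores must be between 125 and 2000."
  }
}

variable "replica_memory_gb" {
  type    = number
  default = 2

  # Documented range: 0.5-8.0 GB per replica.
  validation {
    condition     = var.replica_memory_gb >= 0.5 && var.replica_memory_gb <= 8
    error_message = "replica_memory_gb must be between 0.5 and 8.0."
  }
}

# Inside resource "clickhouse_clickpipe" (assumed placement):
#   scaling {
#     replicas               = 2
#     replica_cpu_millicores = var.replica_cpu_millicores
#     replica_memory_gb      = var.replica_memory_gb
#   }
```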

Supported formats span the usual ClickHouse suspects: JSONEachRow, Avro, AvroConfluent, Protobuf, CSV, CSVWithNames, and Parquet.
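Because the accepted formats form a closed list, a variable can reject typos at plan time. This is a sketch with a hypothetical `pipe_format` variable. Not every source type necessarily accepts every format, so treat the list as an upper bound.

```hcl
variable "pipe_format" {
  type    = string
  default = "JSONEachRow"

  # The seven formats listed above; a given source type may accept fewer.
  validation {
    condition = contains(
      ["JSONEachRow", "Avro", "AvroConfluent", "Protobuf", "CSV", "CSVWithNames", "Parquet"],
      var.pipe_format
    )
    error_message = "pipe_format is not a supported ClickPipes format."
  }
}
```

To use it, reference `format = var.pipe_format` inside the source block, for example `source { kafka { ... } }`.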

A minimal Kafka pipe looks like this:

resource "clickhouse_clickpipe" "kafka_pipe" {

name = "orders-stream"

service_id = "e9465b4b-f7e5-4937-8e21-8d508b02843d"

state = "Running"

scaling { replicas = 2 }

source {

kafka {

type = "confluent"

format = "JSONEachRow"

brokers = "broker:9092"

topics = "orders"

credentials { username = "u"; password = "***" }

}

}

destination {

table = "orders"

managed_table = true

tableDefinition { engine { type = "MergeTree" } }

columns { name = "id"; type = "UInt64" }

columns { name = "total"; type = "Float64" }

}

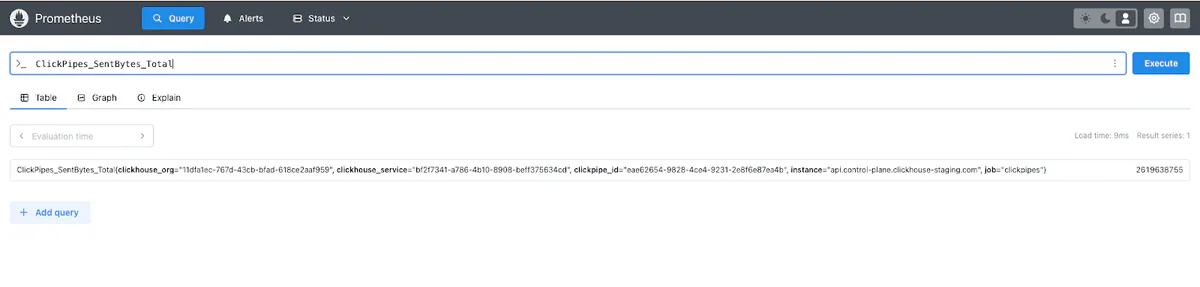

}Observability ships built-in: ClickPipes emits Prometheus metrics (ingestion bytes, rows, error counts) via the ClickHouse Cloud Prometheus endpoint, and Postgres CDC has its own Prometheus/OTEL endpoint that tracks replication slot growth and commit lag.

Comparison vs external ETL

ClickHouse's pitch for ClickPipes-over-external-ETL is largely about price:

| Connector | Cost vs. 3rd-party ETL | Status |
|---|---|---|
| Postgres CDC | ~5× cheaper | GA (metered since Sep 1, 2025) |
| Azure Blob Storage | 10–25× cheaper | GA |
| MongoDB CDC | Free during Beta | Public Beta |
| BigQuery | Uses free native export jobs | Private Preview |

Use cases

Two patterns have dominated adoption, per ClickHouse's Postgres CDC year-in-review:

- Real-time customer-facing analytics. Postgres stays the transactional system of record; ClickHouse powers dashboards that update in seconds. Ashby, Seemplicity, AutoNation, Vapi, and SpotOn are named customers. Over 400 companies now move 200TB+/month of Postgres data into ClickHouse via ClickPipes.

- BigQuery speed layer. Teams keep BigQuery for batch warehousing and bolt ClickHouse on top for sub-second interactive queries.

AI-native workloads are the acceleration story — the top 10 customers saw >1,000% data growth in six months, each adding 85TB+ to their pipelines.

Limitations & pricing

- Managed-table schema changes must happen outside Terraform.

- Changing a pipe's `source.type` forces full replacement (destroy + recreate).

- Postgres CDC cloud coverage is still expanding: GCP support is underway and Azure is planned.

- The BigQuery connector is still in Private Preview and supports full-table syncs only; incremental sync is on the roadmap.
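Because a source type change replaces the pipe, production pipes are a good fit for Terraform's standard `prevent_destroy` lifecycle guard. This sketch reuses the `kafka_pipe` name from the earlier example and omits everything except the relevant meta-argument, so it is a fragment rather than a complete resource.

```hcl
resource "clickhouse_clickpipe" "kafka_pipe" {
  # ... name, service_id, scaling, source, destination as in the Kafka example ...

  lifecycle {
    # Any plan that would destroy this pipe, including a destroy + recreate
    # replacement, now fails with an error instead of being applied.
    prevent_destroy = true
  }
}
```

To replace the pipe deliberately, you first remove the flag. That removal becomes its own visible, reviewable change in the PR.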

What's next

Expect incremental BigQuery syncs, broader Postgres CDC cloud coverage (GCP + Azure), and eventual BYOC support via the PeerDB Helm chart foundation. The MongoDB CDC connector is expected to move from Public Beta to GA, at which point metered pricing will kick in (free today).

For anyone still managing ClickPipes through the console, the GA milestone is a clean cut-over point — terraform import once, codify replicas and schema mappings, wire the plan into your existing CI/CD, and review every future pipeline change as a PR. The “who touched the ingestion config at 2 a.m.?” question finally has a git log answer.

If you're coming from Debezium, Fivetran, or home-grown CDC, the cost math alone (5–25× cheaper for the biggest connectors) is hard to argue with, and you no longer pay for it in ergonomics. Postgres, MySQL, MongoDB, BigQuery, and Azure Blob are all first-class ClickPipes sources in the Terraform provider, with full CRUD, import, and Prometheus observability out of the box.

Sources: ClickHouse blog, Terraform Registry, Postgres CDC GA, Azure Blob GA.